Welcome to a look Inside The Holocron. A collection of articles from the archives of *starwars.com no longer directly available.

(*Archived here with Permission utilising The Internet Archive Wayback Machine)

Digital Capture and Release

January 17, 2002 – Digital Capture

Moviegoers got their first true look at the capabilities of the revolutionary HD system used in Episode II when two theatrical teaser trailers for Attack of the Clones were screened to the public last November. The announcement that Episode II would be shot without a foot of film raised a lot of questions; the trailers’ release answered many of them. Digital not only streamlines processes behind the camera, but also delivers results in front of a hushed audience.

To average moviegoers, the technical details of image acquisition, color-timing and image output are the farthest thing from their minds as they become engrossed in cinematic storytelling. To ILM’s HD Supervisor Fred Meyers, however, they are of prime concern. His work does better serve the story in the end, as the common distractions of the film medium — scratches, pops, reel changes, mismatched colors — increasingly become a thing of the past.

“It’s amazing with this picture,” says Meyers. “You’ve got the tools to blend it all together, so it’s really easy to slip away and immerse yourself in the story that’s on the screen. A lot of the things that we’re used to having to live with are things that can be removed with these new tools. In the past, maybe a scene that didn’t quite match correctly might break your train of thought and pull you out of the story.”

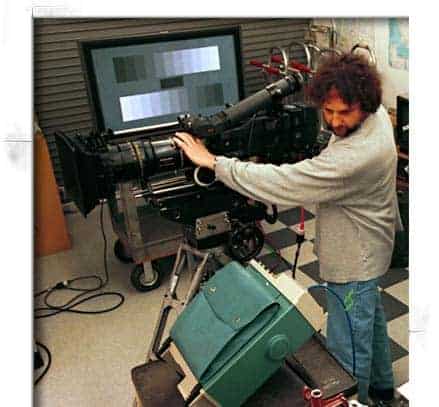

Meyers’ involvement with Episode II started on the image acquisition side — getting a reliable method of capturing images in a digital medium. His role has now expanded to post-production. “I was tasked with engineering the digital HD camera systems for all the principal photography,” explains Meyers. “Now, that has translated back into ILM, with miniature, motion control, blue and greenscreen elements that we’ve shot. We’ve taken all the technology and the camera systems, done some enhancements to them from last summer, and translated that into the photography here at ILM.”

A newer version of the HD camera is now gathering images of miniatures and effects elements at ILM. “It’s slightly smaller and more appropriate to mount on the special effects motion control system. It also involves newer technology, such as a fiber optic interface with the recording system we use,” says Meyers. “I wouldn’t say they’re the next generation, but they’re the next in line. They have additional feature sets for application in the studio here.”

In traditional film, effects artists developed a formula for adjusting the speed of the camera to convey the proper sense of scale in the final image. Large objects or environments tend to have slower, more ponderous motion. To simulate that, the film was over-cranked at a higher speed so that when projected back at a regular 24 frames-per-second, the motion was slowed down.
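The article does not spell out the formula. A commonly cited rule of thumb in miniature photography multiplies the projection rate by the square root of the scale factor, and the sketch below assumes that rule. The function names and the 1:16 example scale are illustrative only and are not taken from ILM's practice.

```python
import math

PROJECTION_FPS = 24.0  # projection rate mentioned in the article


def overcrank_fps(scale_factor, projection_fps=PROJECTION_FPS):
    """Camera frame rate for a miniature built at 1/scale_factor size.

    Assumes the square-root rule of thumb: a fall over a distance that is
    scale_factor times shorter takes sqrt(scale_factor) times less time,
    so the camera runs that much faster to stretch it out on projection.
    """
    if scale_factor < 1:
        raise ValueError("scale_factor must be >= 1")
    return projection_fps * math.sqrt(scale_factor)


def slowdown(camera_fps, projection_fps=PROJECTION_FPS):
    """How many times slower the action appears when projected."""
    return camera_fps / projection_fps


fps = overcrank_fps(16)  # hypothetical 1:16 scale miniature
print(fps, slowdown(fps))
```

Under this assumption, a larger scale factor calls for a faster camera, which is why big, ponderous subjects were shot heavily over-cranked.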

The HD camera shot at a consistent 24 frames-per-second rate. To simulate speed effects, ILM turned to software solutions. “We’ve pulled a whole bunch of tricks out of the CG graphics realm that have been used to simulate both high and slow speed photography,” explains Meyers. “We have applied those to the HD cameras in a way that allows us to take individual frames and manipulate them, combine them, or skip frames out in a way that simulates longer exposures that would have been done traditionally with film cameras and motion control. We’ve done that in a way such that we get the motion blur, and we get the equivalent of increased or longer exposure times.”
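One way to picture the frame manipulation Meyers describes is sketched below, assuming each frame is a flat list of pixel intensities. Averaging runs of consecutive frames approximates a longer exposure, including its motion blur, while skipping frames speeds the motion up. The function names and toy data are hypothetical; the article does not describe ILM's actual software.

```python
def blend_frames(frames):
    """Average several frames pixel by pixel, approximating one longer exposure."""
    if not frames:
        raise ValueError("need at least one frame")
    count = len(frames)
    return [sum(pixels) / count for pixels in zip(*frames)]


def simulate_long_exposure(frames, group_size):
    """Combine each full run of group_size frames into a single blurred frame."""
    return [
        blend_frames(frames[i:i + group_size])
        for i in range(0, len(frames) - group_size + 1, group_size)
    ]


def skip_frames(frames, step):
    """Keep every step-th frame, speeding motion up without adding blur."""
    return frames[::step]


# Toy clip: a bright pixel (1.0) moving one position per frame.
clip = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]

blurred = simulate_long_exposure(clip, group_size=2)
print(blurred)               # the moving pixel is smeared across two positions
print(skip_frames(clip, 2))  # every other frame, with no added blur
```

In the blended output the bright pixel is spread evenly over the two positions it occupied, which is the same smear a shutter held open twice as long would record.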

An example of this effect is visible in the Forbidden Love trailer; a shot of Padmé and Anakin captured in real-time was slowed down for effect. “The capabilities of the HD cameras are such that it’s a very equivalent simulation,” says Meyers. “You wouldn’t be able to tell the difference in motion because it simulates exactly what would be happening on the longer exposures of a film camera.”

Digital Release

The image quality of Episode II is now far more malleable than could ever have been achieved with traditional film. As the movie continues down the postproduction pipeline, ILM is blazing new territory by becoming a digital lab, handling concerns that would previously have been taken care of in photochemical laboratories.

“The lab aspect is not something that ILM would consider a core business component, but since this project has been breaking a lot of barriers, both from the acquisition and now on the delivery side, we didn’t have anyplace to go to,” says Meyers. “So in order to pull this off, we basically built it. Our thinking was that if we build this, demonstrate it, and people see the results with Episode II, that it would hopefully pave the way to a complete digital path for making pictures.”

Tests of delivering an HD image onto film stock for projection have been underway for years, but the theatrical release of the teaser trailer marked a crucial benchmark for the production. It was the first time that the digital images of Episode II would be put to film in full resolution.

“When we came up to the trailer stage, we put together the whole digital delivery, which replaced the traditional lab process of color-timing and interpositive and internegative printing steps. We recorded a printing negative to film that would be the equivalent of what directors and producers usually only have one or maybe two copies of — an answer print. That is what we made multiple copies of, so everything was the first generation print from film.”

Working from such a pure source will ensure a consistency to image quality not found through traditional means. “The advantage of this digital arena is that we can make one master that reflects the film that we’ve made and then make thousands and thousands of exact copies of that,” says Producer Rick McCallum. “All we’re trying to do is give the audience an opportunity to see and hear the film the way we made it.”

“This is a real-time digital lab that includes the color-timing components, manipulation components, and far more capabilities than would be available in a normal lab,” says Meyers. “The teaser trailer was the first time when we had a real-time, full resolution RGB component system all the way to the film recorder. The film recorders don’t run real-time, but the process of creating the master files that get recorded to the film recorder is real-time. It’s interactive too, so that any changes that need to be made can be done right there. The ability to see your final material projected with the changes real-time is also brand new.”

Meyers has been involved in breakthroughs in both the pre- and post-production ends of the spectrum, watching as old methods gave way to the new. “Both the front and the back end of this whole process have been incredibly exciting and challenging to me,” says Meyers. “I think having the opportunity to put the digital acquisition into production photography is just amazing. It’s great to now have that flexibility; if George Lucas or one of the effects supervisors asks for something, to be able to say, ‘no problem, we can do that now’ is great. It’s amazing to know that it’s here now, you can do it, and that we’re doing it.”
